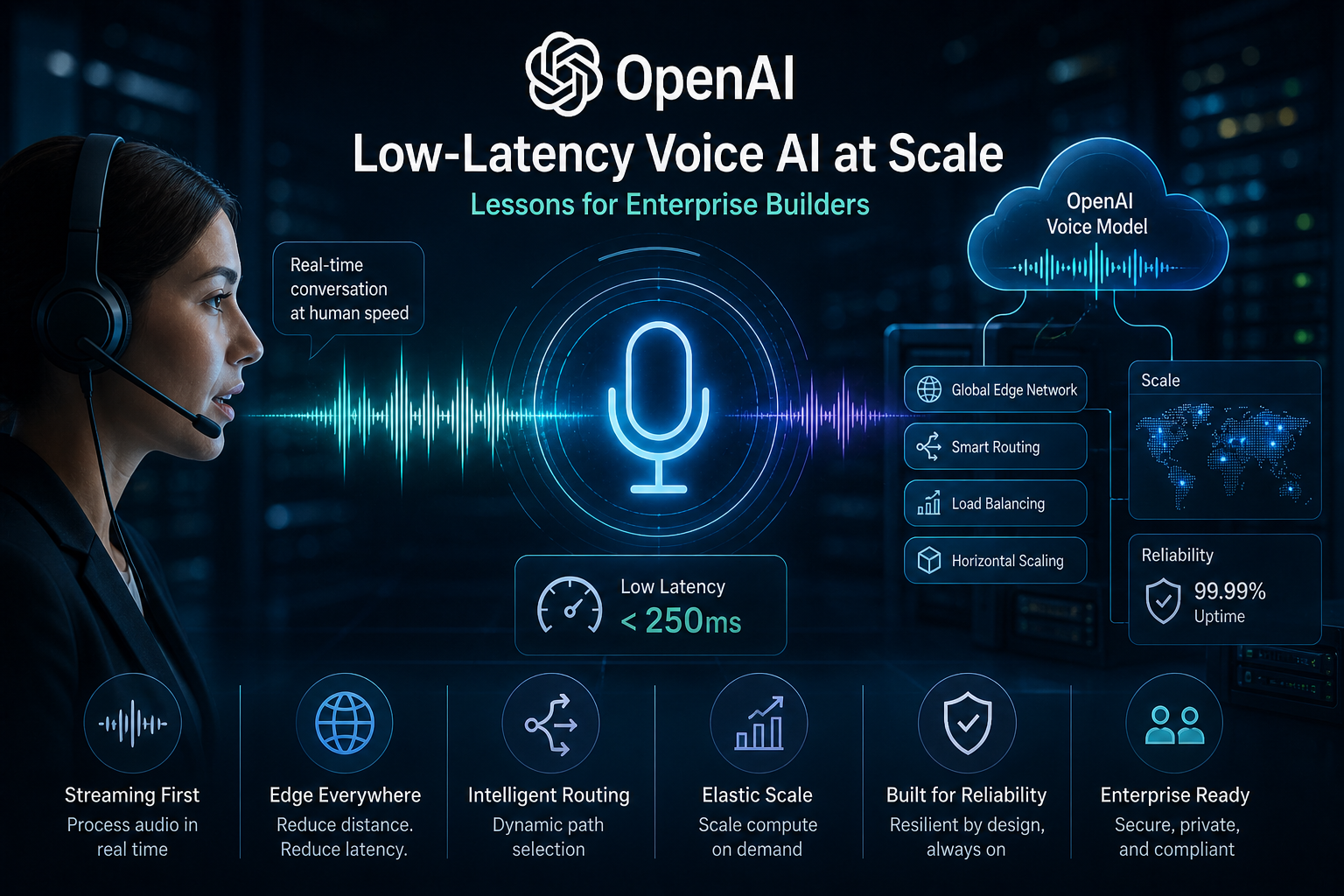

How OpenAI Delivers Low-Latency Voice AI at Scale: Lessons for Enterprise Builders

Intro

Voice AI is no longer just a novelty; it’s becoming a core part of enterprise applications, from customer service bots to real-time collaboration tools. OpenAI’s recent engineering deep dive on delivering low-latency voice AI at scale reveals the infrastructure work needed to make these systems feel natural. As someone who’s seen voice projects stall on latency issues, I consider this a must-read for anyone building or scaling AI-driven interactions.

What happened

On May 4, 2026, OpenAI published a blog post detailing how they achieve sub-300ms response times for voice AI, even at massive scale. They rearchitected their WebRTC stack to handle global routing, stateful sessions, and efficient packet handling. Key innovations include a split relay architecture, native speech-to-speech models that bypass traditional STT-LLM-TTS pipelines, and advanced voice activity detection for natural turn-taking. This powers their Realtime API, enabling seamless voice interactions without the awkward pauses that plague many systems. ...
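To make the turn-taking idea concrete, here is a minimal sketch of the simplest form of voice activity detection: an energy threshold plus a silence window. This is not OpenAI's method, which the post describes only as "advanced"; the frame length, the threshold, the silence window, and the function names are all assumptions chosen for illustration.

```python
import math
from typing import List, Optional, Sequence

FRAME_MS = 20  # assumed frame duration; illustrative, not taken from the post


def rms(frame: Sequence[float]) -> float:
    """Root-mean-square energy of one frame of samples in [-1.0, 1.0]."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def detect_end_of_turn(
    frames: Sequence[Sequence[float]],
    energy_threshold: float = 0.02,  # assumed value; tune per microphone
    silence_ms: int = 200,           # assumed value; trailing silence required
) -> Optional[int]:
    """Return the index of the frame at which the speaker's turn is
    declared over, or None if no end of turn is found.

    A turn ends once speech has been heard and is then followed by
    `silence_ms` of consecutive low-energy frames.
    """
    frames_needed = max(1, silence_ms // FRAME_MS)
    heard_speech = False
    silent_run = 0
    for i, frame in enumerate(frames):
        if rms(frame) >= energy_threshold:
            heard_speech = True
            silent_run = 0  # any speech resets the silence counter
        elif heard_speech:
            silent_run += 1
            if silent_run >= frames_needed:
                return i
    return None


# Hypothetical input: 3 silent frames, 5 speech frames, 15 silent frames.
speech: List[float] = [0.5] * 160
silence: List[float] = [0.0] * 160
frames = [silence] * 3 + [speech] * 5 + [silence] * 15
end_index = detect_end_of_turn(frames)
```

In this sketch the silence window is a direct trade-off: a shorter window lets the system respond sooner but risks cutting the speaker off mid-pause, while a longer one adds to the delay the user perceives before any reply can begin.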