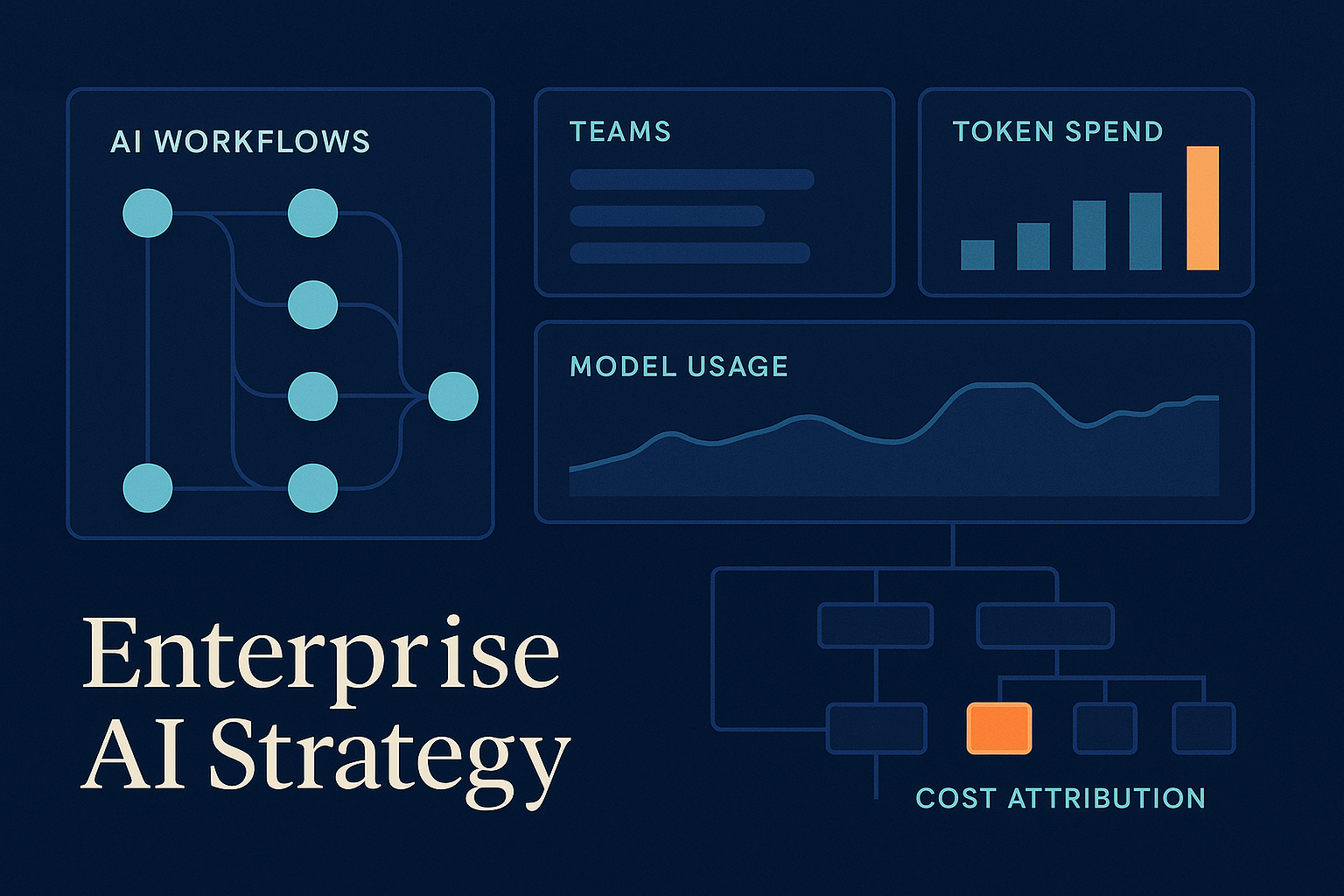

Amazon Bedrock adding granular cost attribution for inference is more important than it might look at first glance.

On paper, it is an AWS billing feature. In practice, it signals that enterprise AI is moving into a phase where knowing who spent what, on which workflow, with which model, is no longer a nice-to-have.

That matters because a lot of AI teams are still treating cost visibility like a finance clean-up task for later. It is not. Once model usage spreads across copilots, internal tools, automated workflows, and agents, weak attribution becomes an architecture problem.

What changed

AWS says Bedrock can now attribute inference cost automatically to the IAM principal making the call, whether that is a user, an application role, or a federated identity. With optional cost allocation tags, teams can also roll spend up by project, team, or other custom dimensions inside AWS billing and reporting.

That is useful on its own, especially for chargebacks and cost analysis. But the bigger point is what it enables.

If you can see cost by caller, by model, and by token direction (input versus output), you can start asking better operational questions:

- Which teams are driving spend?

- Which workflows are actually worth the cost?

- Who is using a premium model where a cheaper one would do?

- Which agents are generating too much output for the value they create?

- Where is experimentation healthy, and where is it just leakage?
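Here is a minimal Python sketch of that kind of slicing. It rolls usage records up by caller, model, and token direction. The record shape, the model names, and the per-1,000-token prices are hypothetical placeholders. They are not Bedrock's billing schema or its actual rates.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices in USD per 1,000 tokens. Real rates differ by model and region.
PRICES = {
    "model-large": {"input": 0.003, "output": 0.015},
    "model-small": {"input": 0.0002, "output": 0.0006},
}


@dataclass
class UsageRecord:
    principal: str  # caller identity, e.g. an application role (illustrative name)
    model: str
    input_tokens: int
    output_tokens: int


def cost_by_caller_model_direction(records):
    """Sum cost per (principal, model, direction), where direction is 'input' or 'output'."""
    totals = defaultdict(float)
    for r in records:
        price = PRICES[r.model]
        totals[(r.principal, r.model, "input")] += r.input_tokens / 1000 * price["input"]
        totals[(r.principal, r.model, "output")] += r.output_tokens / 1000 * price["output"]
    return dict(totals)


records = [
    UsageRecord("support-copilot-role", "model-large", 2_000, 1_000),
    UsageRecord("support-copilot-role", "model-large", 1_000, 3_000),
    UsageRecord("internal-search-role", "model-small", 10_000, 500),
]

# Print the largest cost lines first.
for key, cost in sorted(cost_by_caller_model_direction(records).items(), key=lambda kv: -kv[1]):
    print(key, round(cost, 6))
```

Even in this toy data, output tokens on the larger model account for most of the copilot's bill. That is the kind of signal the questions above are looking for.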

Those are not finance questions alone. They are product, platform, and governance questions.

Why this matters now

A year ago, many organisations were still in demo mode.

Now the pattern is different. AI is being wired into real work: coding, support, document processing, internal search, analysis, workflow automation, and increasingly agent-based systems that can generate a lot of traffic very quickly.

That changes the economics.

With traditional software, marginal usage costs are often predictable enough to hide inside a broader platform budget. With LLM systems, usage costs can change fast based on model choice, prompt design, output length, retries, orchestration patterns, and how much autonomy you give the system.

In other words, architecture choices are now cost choices in a very direct way.
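A back-of-the-envelope calculation makes the point concrete. The sketch below assumes that each retry resends the full prompt and regenerates the full output, with no caching. The token counts, prices, and monthly volume are made up for illustration.

```python
def request_cost(input_tokens, output_tokens, price_in_per_1k, price_out_per_1k, attempts=1):
    """Cost of one logical request, assuming every attempt pays for the full prompt and output."""
    per_attempt = (input_tokens / 1000) * price_in_per_1k + (output_tokens / 1000) * price_out_per_1k
    return per_attempt * attempts


# Hypothetical prices (USD per 1,000 tokens) for a premium and a cheaper model.
PREMIUM = (0.003, 0.015)
CHEAP = (0.0002, 0.0006)

scenarios = {
    "baseline": request_cost(1_500, 500, *PREMIUM),
    "verbose output": request_cost(1_500, 2_000, *PREMIUM),
    "baseline + 2 retries": request_cost(1_500, 500, *PREMIUM, attempts=3),
    "cheaper model": request_cost(1_500, 500, *CHEAP),
}

for name, cost in scenarios.items():
    # Scale to a hypothetical 100,000 requests per month.
    print(f"{name:>22}: ${cost:.4f} per request, ${cost * 100_000:,.2f} per month")
```

Under these assumptions, longer output and retries each roughly triple the cost of a request. The model choice moves it by a factor of 20.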

If you let ten teams build AI workflows without clear attribution, you do not just lose reporting quality. You lose the ability to govern the estate sensibly.

What most teams are missing

A lot of organisations still think AI cost control means negotiating a better vendor price or setting a budget alert.

That is too shallow.

The harder problem is tying cost to accountability.

If all your traffic is hidden behind one shared gateway, one shared service account, or one generic platform bucket, you may know total spend but still not know which product, team, customer, or workflow is responsible for it.

That creates three common failures.

1. The expensive workflow stays invisible

A badly designed agent loop, an overpowered model selection, or a workflow that generates far too much output can sit in production longer than it should because nobody owns the signal clearly enough.

2. Useful experimentation gets penalised with bad governance

When leaders cannot tell productive usage from waste, they often respond with blunt controls. That usually slows down the good work along with the bad.

3. Platform teams become the cost sink

Without proper attribution, the central AI or platform team ends up carrying spend that really belongs to business units or product teams. That makes internal ROI conversations messier than they need to be.

The better way to think about it

Treat cost attribution as part of the control plane for enterprise AI.

That means designing for visibility at the same time as you design for security, quality, and scale.

In practice, that usually means a few things.

Give workflows distinct identities

If every service, agent, or application calls the model layer through the same identity, you are throwing away operational clarity.

Distinct identities, roles, or service accounts make it much easier to understand spend and apply targeted controls later.
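This sketch shows why a shared identity hurts. It maps caller identities to owning teams and reports how much spend cannot be traced to anyone. The identity names, team names, and spend figures are hypothetical. In practice, the mapping might come from your naming conventions for roles or from a service catalogue.

```python
# Hypothetical mapping from caller identity to owning team.
# A shared gateway identity maps to None because many workflows hide behind it.
OWNERS = {
    "invoice-agent-role": "finance-ops",
    "support-copilot-role": "customer-support",
    "shared-ai-gateway-role": None,
}


def attribution_report(spend_by_principal):
    """Return spend per owning team and the fraction of total spend with no owner."""
    by_owner = {}
    unattributed = 0.0
    for principal, cost in spend_by_principal.items():
        owner = OWNERS.get(principal)  # unknown identities are treated as unowned too
        if owner is None:
            unattributed += cost
        else:
            by_owner[owner] = by_owner.get(owner, 0.0) + cost
    total = sum(spend_by_principal.values())
    unattributed_share = unattributed / total if total else 0.0
    return by_owner, unattributed_share


monthly_spend = {
    "invoice-agent-role": 1_200.0,
    "support-copilot-role": 800.0,
    "shared-ai-gateway-role": 2_000.0,
}
by_owner, share = attribution_report(monthly_spend)
print(by_owner)
print(f"Unattributed: {share:.0%}")
```

Splitting the gateway traffic into per-workflow identities would move that unowned half of the bill into the per-team view.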

Tag for the business view, not just the cloud view

Technical attribution is helpful, but business attribution is where the real value sits.

Map usage to dimensions like:

- team

- product

- cost centre

- environment

- customer segment

- workflow or agent name

That is what turns raw billing into something leaders can act on.
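One way to enforce this is to treat the business dimensions as a required tag schema. Anything incomplete goes into a visible "untagged" bucket rather than being dropped. The tag keys below mirror the list above but are illustrative, not AWS-defined names. The strict all-or-nothing rule is a design choice you might relax.

```python
from collections import defaultdict

# Illustrative tag keys matching the business dimensions above.
REQUIRED_TAGS = ("team", "product", "cost_centre", "environment", "customer_segment", "workflow")


def missing_tags(tags):
    """List required tag keys that are absent or empty."""
    return [key for key in REQUIRED_TAGS if not tags.get(key)]


def roll_up(records, dimension):
    """Sum cost by one business dimension; incompletely tagged records go to 'untagged'."""
    totals = defaultdict(float)
    for cost, tags in records:
        bucket = "untagged" if missing_tags(tags) else tags[dimension]
        totals[bucket] += cost
    return dict(totals)


refund_agent = {
    "team": "payments", "product": "checkout", "cost_centre": "CC-100",
    "environment": "production", "customer_segment": "smb", "workflow": "refund-agent",
}
search_tool = {**refund_agent, "team": "knowledge", "product": "intranet", "workflow": "internal-search"}
prototype = {"team": "payments"}  # missing most tags

example_records = [(420.0, refund_agent), (180.0, search_tool), (95.0, prototype)]
print(roll_up(example_records, "team"))
print(missing_tags(prototype))
```

Keeping the untagged spend visible, rather than silently folding it into a team, is what creates pressure to fix the tagging.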

Review model choice like an architectural dependency

Many teams still under-govern model selection.

If a workflow can deliver acceptable outcomes with a cheaper model, that should be a deliberate decision, not an accident. The same goes for output limits, caching, prompt discipline, and when to use deterministic software instead of another model call.
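Making the model decision deliberate can be as simple as running each candidate against the same evaluation set. You then pick the cheapest one that clears an agreed quality bar. The model names, pass rates, and costs below are hypothetical. Scoring what counts as "acceptable" is the hard part, and this sketch assumes it happens elsewhere.

```python
# Hypothetical evaluation results for one workflow: share of test cases judged
# acceptable, and average cost per call in USD.
CANDIDATES = [
    {"model": "model-large", "pass_rate": 0.94, "cost_per_call": 0.0120},
    {"model": "model-medium", "pass_rate": 0.91, "cost_per_call": 0.0040},
    {"model": "model-small", "pass_rate": 0.78, "cost_per_call": 0.0006},
]


def cheapest_acceptable(candidates, min_pass_rate):
    """Return the lowest-cost model meeting the quality bar, or None if none does."""
    acceptable = [c for c in candidates if c["pass_rate"] >= min_pass_rate]
    if not acceptable:
        return None
    return min(acceptable, key=lambda c: c["cost_per_call"])["model"]


print(cheapest_acceptable(CANDIDATES, min_pass_rate=0.90))
```

Recording the threshold alongside the choice turns it into a reviewable architectural decision rather than an accident.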

Separate experimentation from scaled production

You want teams to explore. You do not want prototype economics silently becoming production economics.

Different attribution, budgets, and review rules for sandbox work versus scaled workflows help a lot here.
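Here is a minimal sketch of that separation. Each environment gets its own budget and alert threshold. Sandbox workloads whose traffic starts to look like production are flagged for review. All limits and thresholds here are hypothetical policy values.

```python
# Hypothetical monthly budgets (USD) and alert thresholds per environment.
BUDGETS = {
    "sandbox": {"limit": 500.0, "alert_at": 0.8},
    "production": {"limit": 20_000.0, "alert_at": 0.9},
}

# Hypothetical daily call volume above which sandbox work needs a production review.
SANDBOX_REVIEW_CALLS_PER_DAY = 5_000


def budget_status(environment, month_to_date_spend):
    """Classify spend against the environment's budget as 'ok', 'alert' or 'over budget'."""
    policy = BUDGETS[environment]
    used = month_to_date_spend / policy["limit"]
    if used >= 1.0:
        return "over budget"
    if used >= policy["alert_at"]:
        return "alert"
    return "ok"


def needs_production_review(environment, calls_per_day):
    """Flag sandbox workloads whose volume suggests they are serving real traffic."""
    return environment == "sandbox" and calls_per_day > SANDBOX_REVIEW_CALLS_PER_DAY


print(budget_status("sandbox", 450.0))
print(budget_status("production", 15_000.0))
print(needs_production_review("sandbox", 12_000))
```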

Make agent costs observable at the task level

This becomes especially important as agents become more common.

A single user request may trigger planning, tool use, retries, multiple model calls, and long output chains. If you only measure the top-level application bill, you will miss where the cost actually lives.
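This sketch illustrates task-level tracing. Every model call an agent makes is recorded against the originating task and labelled with the step that triggered it. Cost can then be read per task and per step, not only at the application level. The step labels and costs are hypothetical. In a real system, the per-call cost would be derived from the token usage your model API reports.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class ModelCall:
    step: str    # illustrative labels such as "plan", "tool_call", "retry", "answer"
    cost: float  # USD, derived from reported token usage


@dataclass
class TaskTrace:
    task_id: str
    calls: list = field(default_factory=list)

    def record(self, step, cost):
        self.calls.append(ModelCall(step, cost))

    def total(self):
        return sum(call.cost for call in self.calls)

    def by_step(self):
        totals = defaultdict(float)
        for call in self.calls:
            totals[call.step] += call.cost
        return dict(totals)


trace = TaskTrace("ticket-4821")
trace.record("plan", 0.010)
trace.record("tool_call", 0.004)
trace.record("tool_call", 0.004)
trace.record("retry", 0.020)
trace.record("answer", 0.015)

print(trace.task_id, round(trace.total(), 4), trace.by_step())
```

In this made-up trace, the retry costs more than the final answer. A top-level application bill would hide exactly that.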

A practical checklist for leaders and architects

If you are scaling AI beyond a few isolated pilots, these are worth checking now:

- Can you attribute model spend to a team, workflow, or product owner?

- Can you distinguish prototype traffic from production traffic?

- Can you see which models are used where, and by whom?

- Do you know which workflows create the highest cost per useful outcome?

- Are your gateway or agent patterns preserving attribution, or collapsing it?

- Do platform, engineering, and finance have a shared view of what good AI spend looks like?
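For the cost-per-useful-outcome question, a simple ranking is often enough to start the conversation. Each team has to agree on what counts as a "useful outcome", such as a resolved ticket, an accepted draft, or a merged change. The workflow names and figures here are hypothetical.

```python
import math


def rank_by_cost_per_outcome(workflows):
    """Return (workflow, cost per useful outcome) pairs, most expensive first.

    Workflows with no useful outcomes get infinity so they surface at the top.
    """
    ranked = []
    for name, stats in workflows.items():
        useful = stats["useful_outcomes"]
        ratio = stats["spend"] / useful if useful else math.inf
        ranked.append((name, ratio))
    return sorted(ranked, key=lambda item: item[1], reverse=True)


monthly = {
    "contract-summary": {"spend": 900.0, "useful_outcomes": 300},
    "support-drafts": {"spend": 400.0, "useful_outcomes": 800},
    "research-agent": {"spend": 1_200.0, "useful_outcomes": 0},
}

for name, ratio in rank_by_cost_per_outcome(monthly):
    print(f"{name}: {ratio:.2f} USD per useful outcome")
```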

If the answer to most of those is no, the problem is probably not billing. It is architecture.

Bottom line

The interesting part of Bedrock’s new cost attribution feature is not just that AWS shipped another cloud control.

It is that enterprise AI has reached a stage where cost visibility needs to sit much closer to system design.

The teams that handle this well will not just manage spend better. They will make better model choices, govern agents more intelligently, and have a much easier time proving which AI workloads deserve to scale.

That is a much stronger position than finding out six months later that adoption is up, the bill is up, and nobody can say which part of the estate is actually worth it.