The Agentic Arms Race: Vulnerability Discovery at Scale

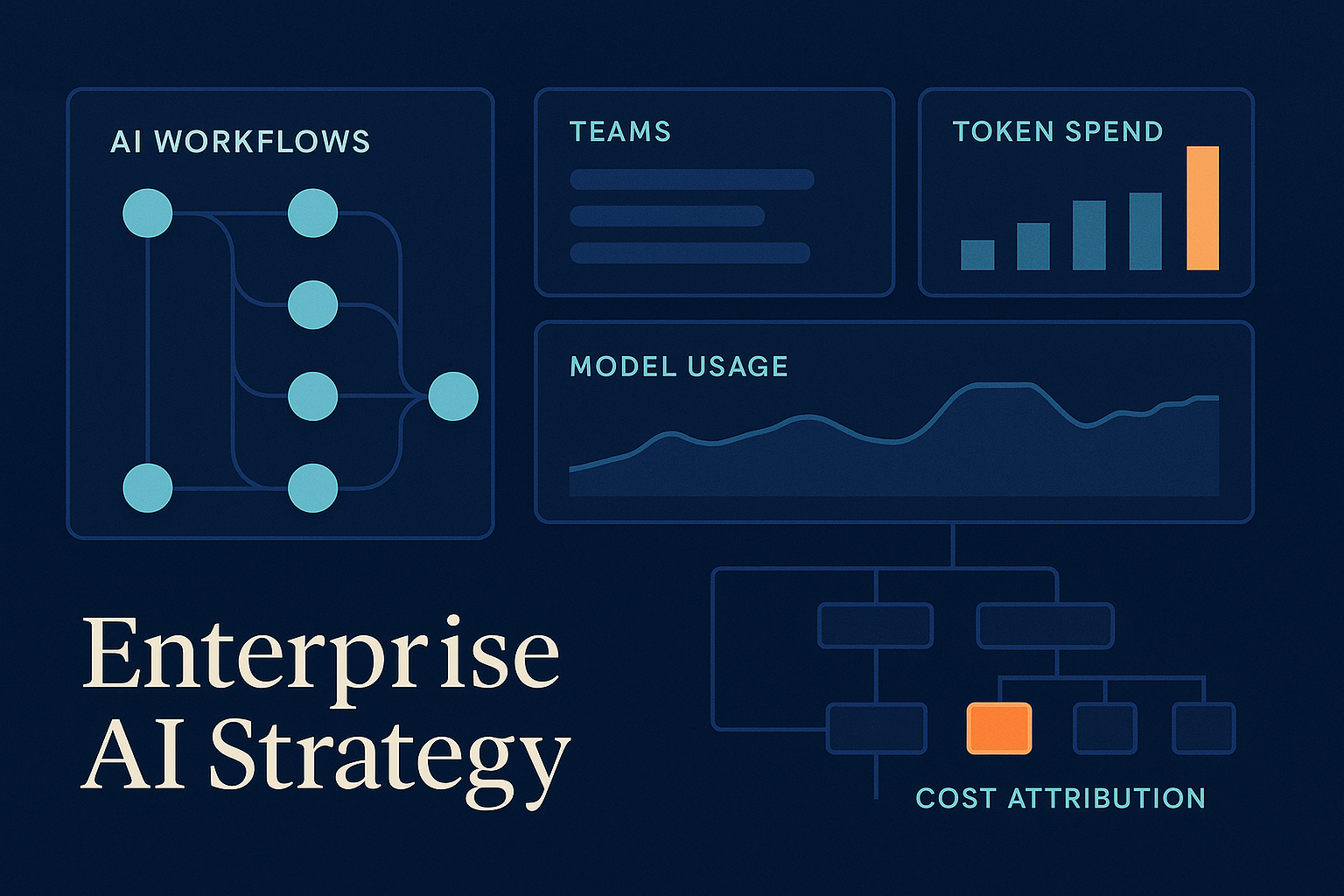

Intro

The “security through obscurity” era is dead, killed by agents that can read code faster than humans can write it. This week’s near-simultaneous releases from OpenAI, Anthropic, and Microsoft signal a fundamental shift. AI security is no longer about static scanners. It is about adversarial agents locked in a permanent discovery loop.

What happened

Three major developments hit the wire at once, all focused on “Agentic Security”:

- OpenAI launched the GPT-5.5 Bio Bug Bounty, offering $25,000 for a “universal jailbreak” of its biological safety layers. This isn’t just a contest. It is a stress test of model-level guardrails against high-severity misuse.
- Anthropic released Claude Security, a defensive tool that uses Claude Opus 4.7 to autonomously scan codebases, validate vulnerabilities, and, crucially, generate patches.
- Microsoft announced an AI-driven scanning harness for Azure. It is designed to automate the validation and prioritization of vulnerabilities based on real-world exploitability.

Why it matters

We are moving from “point-in-time” security audits to “continuous adversarial pressure.” If your defensive agents aren’t as capable as the offensive ones being tested in these bounties, the window between a flaw’s discovery and its exploitation shrinks to near zero. For enterprise leaders, this changes the “Builder’s Tax”: security is now a runtime cost of agentic operations, not a pre-deployment checkbox.

...